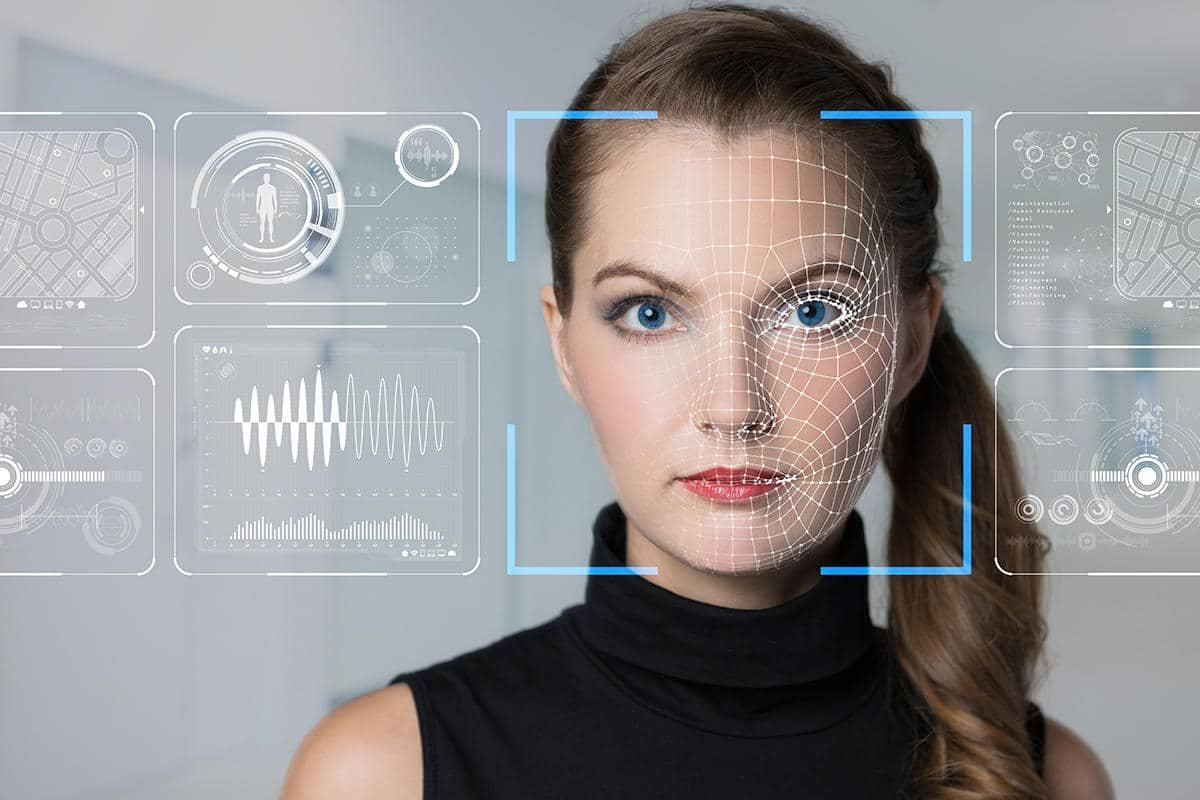

Deepfakes are fake videos, photos, or audio clips created with artificial intelligence (AI) programs. These tools can copy a real person's face or voice, so the result looks real even though it is not. Such content is also called synthetic media. In Pakistan, fake videos are being used to trick people and damage reputations.

In 2025, during a conflict with India, a fake video of General Ahmed Sharif Chaudhry went viral. The clip appeared to show him admitting that Pakistan had lost military jets, but it was a deepfake. It was shared almost 700,000 times before it was proven fake. Its goal was to confuse people and spread fear.

Image Credit: Bellingcat

Who Is Being Targeted in Pakistan?

In 2023, PTI used an AI-generated version of Imran Khan's voice at a rally while he was in jail. The cloned voice, which sounded like his, delivered a speech in a video. Many people believed it was real.

Image Credit: Forbes

In 2024, a fake video of Punjab Minister Azma Bukhari appeared online. It had been edited to harm her image. She said the video left her feeling broken, and she took the case to court.

Actress Hania Aamir was also targeted when a deepfake video showing her in an inappropriate scene went viral. She spoke out against it and asked the government to take action.

Image Credit: Tribune

Some fake videos showed Pakistani generals saying things they never did. Others showed world leaders like Donald Trump making threats against Pakistan. All of these were made using deepfake technology. They fooled many people before being proven false.

Why Are Deepfakes a Big Problem?

Many people in Pakistan use social media, but they often do not know how to check whether a video is fake. Research suggests that deepfakes are most effective when people already trust the message. The effect is even worse when there is political tension or hate online. These fake videos are causing people to lose trust in the news, in leaders, and even in each other. This is dangerous for the country.


How Can We Spot Fake Videos?

Some groups in Pakistan are working on ways to detect fake videos. They use online tools to check whether a video has been edited. One tool, InVID, reads a video's metadata and splits it into keyframes that can be run through reverse image searches. Another tool, Forensically, looks for signs of editing in images, such as cloned areas or uneven compression. India has developed a tool called Vastav.AI to detect deepfakes, and Pakistan could build a similar one. Some universities are also helping, but more support is needed from the government.

What Should the Public Do?

People in Pakistan must learn to tell real content from fake. Schools should teach children to ask questions when they see videos online. Colleges should offer digital safety classes. Parents and teachers should guide young people on what to believe and what to ignore. Most people share videos without checking them. That has to stop.

Pakistan's cyber law, the Prevention of Electronic Crimes Act (PECA) 2016, does not yet cover deepfakes. Experts say it must be updated. AI is advancing rapidly, and our laws must keep pace.

Social media companies should also help. They should mark AI-made content and block fake accounts. Fact-checkers should work faster and be easier to find.

What Must Pakistan Do?

Deepfakes are more than just fake videos. They are a serious threat to Pakistan's safety, peace, and trust. These AI-generated clips can look and sound so realistic that many people believe them without questioning whether they are authentic. They have been used to target leaders, judges, journalists, and even actresses like Hania Aamir. Some deepfakes were shared during elections, confusing voters and spreading fear. Others tried to stir up conflict between countries or erode public trust in the army and the courts.


If we don't act fast, deepfakes will continue to harm people and damage Pakistan's future. That is why everyone, including the government, schools, parents, and the media, must work together. We need strong laws that ban deepfakes made to trick or harm others. The government must update the cyber law (PECA) to address new AI risks. Schools should teach children how to check whether videos and audio are authentic. TV, radio, and newspapers must also explain to people how deepfakes work and why they are dangerous.

Social media companies must help too. They should label AI content and block fake accounts. Fact-checking websites should get more support and be shared widely on WhatsApp, X, and Facebook. Most of all, every person must think before they share. Ask: Is this real? Where did it come from? Can I trust this?

Pakistan’s best protection is its people. A smart, alert public can stop fake news before it spreads. In today’s digital war, truth is our strongest shield.